LLM Adventures: Text2SQL

Inspired by Kevin Jordain, who clued me in on the growing demand for using natural questions to analyze clients’ data; John Kerski, who gave a great presentation to our Atlanta BI Group on integrating Power Query with Azure OpenAI; and Kyle Hale, who believes (wrongly 😊) that Databricks Genie will make Power BI and semantic modeling obsolete, I set out on a quest to find out how effective Large Language Models (LLMs) are at generating SQL from natural questions, also known as Text2SQL. What better way to spend some free time around the 4th of July holiday, right?

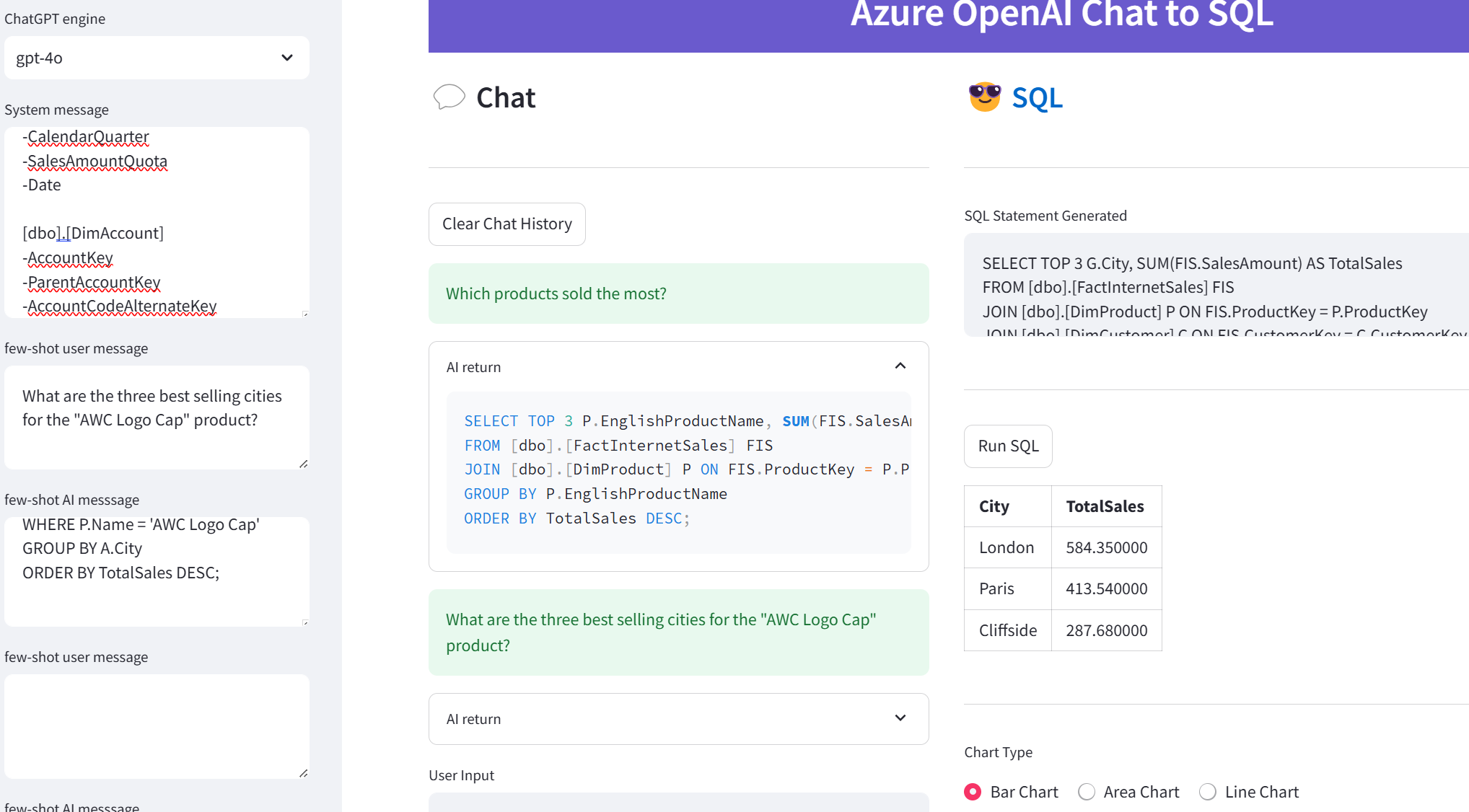

I have to say that I was impressed with the LLM. I used Ric Zhou’s excellent Text2SQL sample as a starting point inside Visual Studio Code. The sample uses the Python streamlit framework to create a web app that submits natural questions to Azure OpenAI.

My humble contributions were:

- I switched to the Azure OpenAI gpt-4o model.

- Because the Python openai module has deprecated ChatCompletion, I transitioned the code to use the new way of interacting, as I explained in the related GitHub issue.

- I used the AdventureWorksDW2019 database which I deployed to Azure SQL Database.
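To illustrate the second point, here is a minimal sketch of what the newer calling style looks like with the `openai` package, version 1.0 or later. It is not the sample's actual code: the helper names `make_client` and `generate_sql`, the API version string, and the `temperature=0` setting are my assumptions for illustration.

```python
def make_client(endpoint: str, api_key: str, api_version: str = "2024-02-01"):
    """Create an Azure OpenAI client (assumes openai>=1.0 is installed).

    The api_version default is an assumption; use the one your resource supports.
    """
    # Imported lazily so the rest of this sketch loads without the package.
    from openai import AzureOpenAI

    return AzureOpenAI(
        azure_endpoint=endpoint,
        api_key=api_key,
        api_version=api_version,
    )


def generate_sql(client, deployment: str, messages: list) -> str:
    """Send the chat messages and return the text of the model's reply."""
    # Pre-1.0 style, no longer supported:
    #   openai.ChatCompletion.create(engine=deployment, messages=messages)
    response = client.chat.completions.create(
        model=deployment,  # with Azure, this is the deployment name
        messages=messages,
        temperature=0,  # assumption: favor repeatable SQL over creative SQL
    )
    return response.choices[0].message.content
```

A call would then look like `generate_sql(make_client(endpoint, key), "gpt-4o", messages)`, where `"gpt-4o"` stands for whatever you named your deployment.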

I was amazed at how simple the LLM input was. Given that the model is trained on many popular languages, including SQL, all you have to do is provide some context, the database schema (generated in a simple format by a provided tool), and a few prompts:

[{'role': 'system', 'content': 'You are smart SQL expert who can do text to SQL, following is the Azure SQL database data model <database schema>'},

{'role': 'user', 'content': 'What are the three best selling cities for the "AWC Logo Cap" product?'},

{'role': 'assistant', 'content': 'SELECT TOP 3 A.City, sum(SOD.LineTotal) AS TotalSales \\nFROM [SalesLT].[SalesOrderDe...BY A.City \\nORDER BY TotalSales DESC; \\n'},

{'role': 'user', 'content': 'natural question here'}]

Let’s decipher this:

- The first prompt provides context to the model for our intention to act as a SQL expert.

- The sample includes a tool that generates the database schema, consisting of tables and columns, in the format shown at the end of this item. Notice that referential integrity constraints are not included (the model doesn’t need to be told how the tables are related!). There is nothing special about the syntax. It is fluid (impressive!), which is a paradigm shift from structured XML or JSON, where a missing bracket crashes the system. So, I could have expressed the schema in any of the following notations and it would still work (ideally, we should also provide the column data types):

Table: FactSalesQuota

– SalesQuotaKey

– other columns

or

Table: FactSalesQuota

Columns: column1, column2

or

CREATE TABLE (DDL here)

The format that the tool generates:

<Table definition>

[dbo].[FactSalesQuota]

-SalesQuotaKey

-EmployeeKey

-DateKey

-CalendarYear

-CalendarQuarter

-SalesAmountQuota

-Date

[dbo].[DimAccount]

-AccountKey

-…

- The next prompt assumes the role of an end user who will ask a natural question, such as ‘What are the three best selling cities for the “AWC Logo Cap” product?’

- The next prompt assumes the role of an assistant who shows the model what a correct query for that question looks like.

- The final prompt acts as the user again and carries whatever natural question you enter.
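The pieces described above can be sketched in a few lines of Python. This is my own illustration, not the code from the sample: `render_schema` and `build_messages` are hypothetical helpers, and I assume the schema arrives as (schema, table, column) rows, for example from a query against `INFORMATION_SCHEMA.COLUMNS`.

```python
SYSTEM_TEMPLATE = (
    "You are smart SQL expert who can do text to SQL, "
    "following is the Azure SQL database data model\n{schema}"
)


def render_schema(columns):
    """Render (schema, table, column) rows in the '<Table definition>' text format."""
    tables = {}  # dicts keep insertion order, so tables appear as first seen
    for schema, table, column in columns:
        tables.setdefault(f"[{schema}].[{table}]", []).append(column)
    lines = ["<Table definition>"]
    for name, cols in tables.items():
        lines.append(name)
        lines.extend(f"-{col}" for col in cols)
    return "\n".join(lines)


def build_messages(schema_text, example_question, example_sql, question):
    """Assemble the four prompts: context, one worked example, and the real question."""
    return [
        {"role": "system", "content": SYSTEM_TEMPLATE.format(schema=schema_text)},
        {"role": "user", "content": example_question},
        {"role": "assistant", "content": example_sql},
        {"role": "user", "content": question},
    ]


rows = [
    ("dbo", "FactSalesQuota", "SalesQuotaKey"),
    ("dbo", "FactSalesQuota", "EmployeeKey"),
    ("dbo", "DimAccount", "AccountKey"),
]
messages = build_messages(
    render_schema(rows),
    "Which products sold the most?",
    "SELECT P.EnglishProductName, SUM(FIS.SalesAmount) AS TotalSales ...",  # shortened here
    "Which customers bought something in 2012?",
)
print(messages[0]["content"])
```

Because the model tolerates loose syntax, `render_schema` could just as well emit one of the other notations; the only design choice that matters is that every table and column name the model may need appears somewhere in the system prompt.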

The sample also supports running the query against the database and visualizing the results as a table, bar chart, area chart, or line chart. Here are some results:

- What are the three best selling cities for the “AWC Logo Cap” product?

SELECT TOP 3 G.City, SUM(FIS.SalesAmount) AS TotalSales FROM [dbo].[FactInternetSales] FIS JOIN [dbo].[DimProduct] P ON FIS.ProductKey = P.ProductKey JOIN [dbo].[DimCustomer] C ON FIS.CustomerKey = C.CustomerKey JOIN [dbo].[DimGeography] G ON C.GeographyKey = G.GeographyKey WHERE P.EnglishProductName = 'AWC Logo Cap' GROUP BY G.City ORDER BY TotalSales DESC;

- Which products sold the most?

SELECT P.EnglishProductName, SUM(FIS.SalesAmount) AS TotalSales FROM [dbo].[FactInternetSales] FIS JOIN [dbo].[DimProduct] P ON FIS.ProductKey = P.ProductKey GROUP BY P.EnglishProductName ORDER BY TotalSales DESC;

- Which customers bought something in 2012 but didn’t buy anything in 2011?

SELECT DISTINCT C.CustomerKey, C.FirstName, C.LastName FROM [dbo].[FactInternetSales] FIS2012 JOIN [dbo].[DimCustomer] C ON FIS2012.CustomerKey = C.CustomerKey WHERE FIS2012.OrderDateKey BETWEEN 20120101 AND 20121231 AND C.CustomerKey NOT IN ( SELECT DISTINCT FIS2011.CustomerKey FROM [dbo].[FactInternetSales] FIS2011 WHERE FIS2011.OrderDateKey BETWEEN 20110101 AND 20111231);

All SQL statements were syntactically and logically correct. Pretty amazing, right? Especially considering that the model had to figure out how the tables are related, such as that DimCustomer is related to FactInternetSales but not to FactResellerSales.
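One inexpensive way to sanity-check the logic of a generated query is to run it against a handful of hand-made rows where you already know the answer. The sketch below does that for the 2012-but-not-2011 query using Python's built-in `sqlite3` module. The toy customers and the `run_on_toy_data` helper are made up for illustration, and SQLite is only a stand-in for Azure SQL: it happens to accept this particular statement (including the bracketed names, once an in-memory database is attached as `dbo`), but it would reject T-SQL-only syntax such as `TOP`.

```python
import sqlite3

GENERATED_SQL = """
SELECT DISTINCT C.CustomerKey, C.FirstName, C.LastName
FROM [dbo].[FactInternetSales] FIS2012
JOIN [dbo].[DimCustomer] C ON FIS2012.CustomerKey = C.CustomerKey
WHERE FIS2012.OrderDateKey BETWEEN 20120101 AND 20121231
  AND C.CustomerKey NOT IN (
    SELECT DISTINCT FIS2011.CustomerKey
    FROM [dbo].[FactInternetSales] FIS2011
    WHERE FIS2011.OrderDateKey BETWEEN 20110101 AND 20111231);
"""


def run_on_toy_data(sql):
    """Run the query against a tiny in-memory stand-in for the two tables it uses."""
    conn = sqlite3.connect(":memory:")
    # Attach a second in-memory database named dbo so [dbo].[Table] resolves.
    conn.execute("ATTACH DATABASE ':memory:' AS dbo")
    conn.execute(
        "CREATE TABLE dbo.DimCustomer "
        "(CustomerKey INTEGER PRIMARY KEY, FirstName TEXT, LastName TEXT)"
    )
    conn.execute(
        "CREATE TABLE dbo.FactInternetSales (CustomerKey INTEGER, OrderDateKey INTEGER)"
    )
    conn.executemany(
        "INSERT INTO dbo.DimCustomer VALUES (?, ?, ?)",
        [(1, "Ann", "Ray"), (2, "Bo", "Lee"), (3, "Cy", "Kim"), (4, "Di", "Fox")],
    )
    conn.executemany(
        "INSERT INTO dbo.FactInternetSales VALUES (?, ?)",
        [
            (1, 20110615), (1, 20120310),  # bought in both years: excluded
            (2, 20120105), (2, 20121120),  # bought only in 2012: expected
            (3, 20110901),                 # bought only in 2011: excluded
            (4, 20130202),                 # bought only in 2013: excluded
        ],
    )
    result = conn.execute(sql).fetchall()
    conn.close()
    return result


print(run_on_toy_data(GENERATED_SQL))  # prints [(2, 'Bo', 'Lee')]
```

Only the customer who bought in 2012 and not in 2011 comes back, and only once despite two 2012 orders, which matches the intent of the question.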

In summary, it appears that LLMs can be used effectively for generating SQL from natural text. This opens new possibilities for ad hoc data analytics and for assisting users with learning or generating code. I believe that a simplified schema, such as a star schema, is very important. This underscores the importance of schema design for data analytics, something that AI can’t do (yet).

What about Text2DAX? Can an LLM do it? You bet! DAX happens to be one of the programming languages used for training, so stay tuned for the next blog post, where I’ll present a poor man’s DAX copilot.