Prologika Newsletter Fall 2024

When it comes to Generative AI and Large Language Models (LLMs), most people fall into two categories. The first is alarmists. These people are concerned about the negative consequences of indiscriminate use of AI, such as losing their jobs or military weapons for mass annihilation. The second category is deniers, and I must admit I was one of them. When Generative AI came out, I dismissed it as vendor propaganda, like Big Data, auto-generative BI tools, lakehouses, ML, and the like. But the more I learn and use Generative AI, the more credit I believe it deserves. Because LLMs are trained on human and programming languages, one natural case where they could be helpful is code copilots, which is the focus of this newsletter. Let’s give Generative AI some credit!

Text2SQL

I have to say that I was impressed with the LLM. I used Ric Zhou’s excellent Text2SQL sample as a starting point inside Visual Studio Code.

The sample uses the Python Streamlit framework to create a web app that submits natural-language questions to Azure OpenAI. I was amazed at how simple the LLM input was. Given that the model is trained on many popular languages, including SQL, all you have to do is provide some context, the database schema (generated in a simple format by a provided tool), and a few prompts:

[{'role': 'system', 'content': 'You are smart SQL expert who can do text to SQL, following is the Azure SQL database data model <database schema>'},

{'role': 'user', 'content': 'What are the three best selling cities for the "AWC Logo Cap" product?'},

{'role': 'assistant', 'content': 'SELECT TOP 3 A.City, sum(SOD.LineTotal) AS TotalSales \\nFROM [SalesLT].[SalesOrderDe...BY A.City \\nORDER BY TotalSales DESC; \\n'},

{'role': 'user', 'content': 'natural question here'}]

Let’s drill into these prompts.

- The first prompt is an example of role prompting, which gives the model context by telling it to act as a SQL expert.

- The sample includes a tool that generates the database schema, consisting of tables and columns, in a simple format. Notice that referential integrity constraints are not included (the model doesn’t need to be told how the tables are related!)

- The next “few-shot” prompt assumes the role of an end user asking a natural-language question, such as ‘What are the three best selling cities for the “AWC Logo Cap” product?’ It is followed by the assistant’s response, which shows the model what the correct query should look like.

- Finally, the actual question that needs a new query follows.
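
To make the structure of these prompts concrete, here is a minimal sketch of how such a message list could be assembled in Python. The function name `build_messages`, the `FEW_SHOT` list, and the example question and query in it are my own illustrative assumptions, not code from the sample; only the system prompt wording is taken from the prompts shown above.

```python
# A sketch only: names and the few-shot example are hypothetical,
# not taken from the Text2SQL sample itself.

# System (role) prompt; the wording follows the prompt shown above.
SYSTEM_TEMPLATE = (
    "You are smart SQL expert who can do text to SQL, "
    "following is the Azure SQL database data model {schema}"
)

# Few-shot pairs: an example question and the SQL we want the model to imitate.
# This pair is a simplified stand-in for the one used in the sample.
FEW_SHOT = [
    (
        "How many customers are there?",
        "SELECT COUNT(*) AS CustomerCount FROM [SalesLT].[Customer];",
    ),
]


def build_messages(schema, question, few_shot=FEW_SHOT):
    """Return a chat-style message list: role prompt, few-shot pairs, new question."""
    messages = [
        {"role": "system", "content": SYSTEM_TEMPLATE.format(schema=schema)}
    ]
    for example_question, example_sql in few_shot:
        messages.append({"role": "user", "content": example_question})
        messages.append({"role": "assistant", "content": example_sql})
    # The actual end-user question always goes last.
    messages.append({"role": "user", "content": question})
    return messages


if __name__ == "__main__":
    for message in build_messages(
        "Customer(CustomerID, CompanyName)", "List all customers"
    ):
        print(message["role"], "->", message["content"])
```

The resulting list has the same shape as the prompts above, so it could be passed as the messages of a chat completion request to whichever LLM endpoint you use.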

You can find more Text2SQL implementation details in my post “LLM Adventures: Text2SQL”.

Text2DAX

As a Microsoft BI practitioner, the next natural stop was Text2DAX. But wait, we have a Microsoft Fabric Copilot already for this, right? Yes, but what happens when you click the magic button in Power BI Desktop? You are greeted with a message that you need to purchase an F64 or larger capacity. It’s a shame that Microsoft has decided that AI should be a super-premium feature. Given this horrible predicament, what would an innovative developer strapped for cash do? Create their own copilot of course!

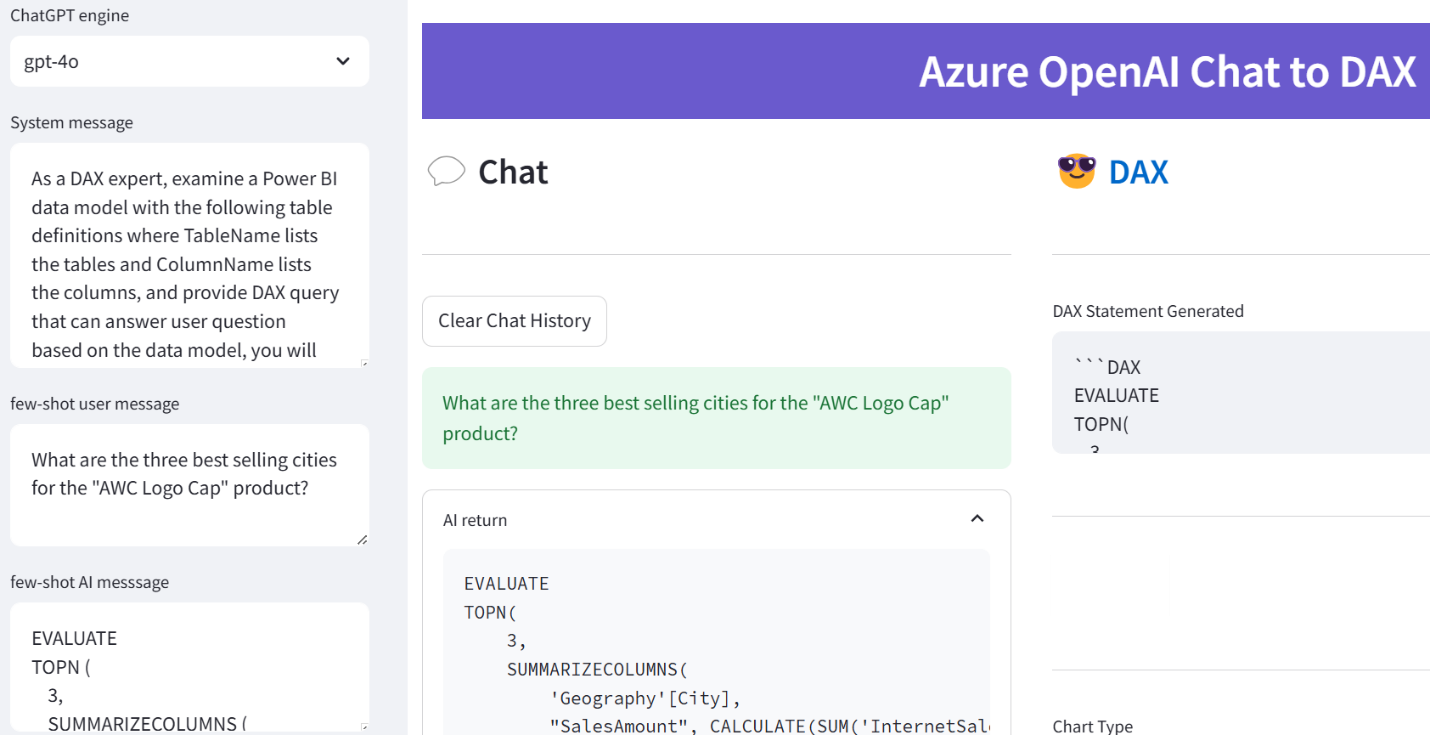

Building upon the previous sample, this is remarkably simple. First, I obtained the model schema using Analysis Services Dynamic Management Views (DMVs). Second, I slightly changed the prompt, along these lines:

“As a DAX expert, examine a Power BI data model with the following table definitions where TableName lists the tables and ColumnName lists the columns, and provide DAX query that can answer user questions based on the data model, you will think step by step throughout and return DAX statement directly without any additional explanation.”
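
As a rough illustration of these two steps, the sketch below turns a list of (TableName, ColumnName) pairs into schema text and appends it to the prompt above. It assumes the rows have already been retrieved from the DMVs; the helper names `format_schema` and `build_dax_messages`, the comma-separated layout, and the sample rows are hypothetical choices of mine, not the format the actual implementation uses.

```python
# A sketch only: helper names and the schema layout are assumptions.

# System prompt; the wording follows the prompt quoted above.
DAX_SYSTEM_PROMPT = (
    "As a DAX expert, examine a Power BI data model with the following table "
    "definitions where TableName lists the tables and ColumnName lists the "
    "columns, and provide DAX query that can answer user questions based on "
    "the data model, you will think step by step throughout and return DAX "
    "statement directly without any additional explanation."
)


def format_schema(rows):
    """Render (TableName, ColumnName) pairs as simple comma-separated lines."""
    lines = ["TableName,ColumnName"]
    lines.extend(f"{table},{column}" for table, column in rows)
    return "\n".join(lines)


def build_dax_messages(rows, question):
    """Combine the role prompt, the model schema, and the user question."""
    return [
        {
            "role": "system",
            "content": DAX_SYSTEM_PROMPT + "\n" + format_schema(rows),
        },
        {"role": "user", "content": question},
    ]


if __name__ == "__main__":
    # Made-up rows standing in for the DMV output.
    sample_rows = [("Sales", "SalesAmount"), ("Customer", "City")]
    for message in build_dax_messages(sample_rows, "What are total sales by city?"):
        print(message["role"], "->", message["content"])
```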

You can find more Text2DAX implementation details in my post “LLM Adventures: Text2DAX”.

In summary, it appears that LLMs can effectively assist us in writing code. The emphasis is on “assist” because I view the LLM’s role as a second set of eyes: “Hey, what do you think about this problem I’m trying to solve here?” An LLM doesn’t absolve us from doing our homework and learning the fundamentals, nor can it compensate for improper design. While an LLM might not always generate optimal code and might sometimes fabricate, it can definitely assist you in creating business calculations, generating test queries, and learning along the way.

BTW, you can use any of the publicly available LLM apps, such as Copilot, ChatGPT, Google Gemini or Perplexity (you don’t need the sample app I’ve demonstrated) for Text2SQL and Text2DAX, and you will probably obtain similar results if you give them the right prompts. I took this approach because I was interested in automating the process for business users.

Teo Lachev

Prologika, LLC | Making Sense of Data