Atlanta MS BI and Power BI Group Meeting on February 1st

Please join us online for the next Atlanta MS BI and Power BI Group meeting on Monday, February 1st, at 6:30 PM. Paul Turley (MVP) will show you how to use Power Query to shape and transform data. For more details, visit our group page.

| Presentation: | Preparing, shaping & transforming Power BI source data |

| Date: | February 1st, 2021 |

| Time: | 6:30 – 8:30 PM ET |

| Place: | Click here to join the meeting |

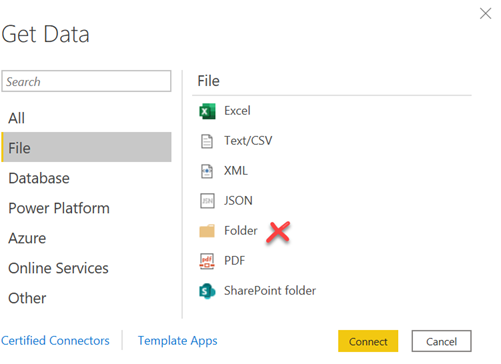

| Overview: | In a business intelligence solution, data must be shaped and transformed. Your source data is rarely, if ever, going to be in the right format for analytic reporting. It may need to be consolidated into related fact and dimension tables, summarized, grouped, or just cleaned up before tables can be imported into a data model for reporting. · Where should I shape and transform data: at the source, in Power Query, or in the BI data model? · Where and what is Power Query? Understand how to get the most from this amazing tool and how to use it most efficiently in your environment. · Understand Query Folding and how it affects the way you prepare, connect to, and interact with your data sources, whether using files, unstructured storage, native SQL, views, or stored procedures. · Learn to use parameters to manage connections and make your solution portable. Tune and organize queries for efficiency and maintainability. |

| Speaker: | Paul (Blog, LinkedIn, Twitter) is a Principal Consultant for 3Cloud Solutions (formerly Pragmatic Works), a Mentor, and a Microsoft Data Platform MVP. He consults, writes, speaks, teaches & blogs about business intelligence and reporting solutions. He works with companies around the world to model data and to visualize and deliver critical information for informed business decisions, using the Microsoft data platform and business analytics tools. He is a Director of the Oregon Data Community PASS chapter & user group, and the author or lead author of Professional SQL Server 2016 Reporting Services and 14 other titles from Wrox & Microsoft Press. He holds several certifications, including MCSE for the Data Platform and BI. |

| Prototypes without pizza: | Power BI Latest |