Atlanta Microsoft BI Group Meeting on March 4th (Navigating Microsoft Fabric – Choosing the Right Workload for Your Needs)

Atlanta BI fans, please join us in person for the next meeting on Monday, March 4th at 6:30 PM ET. The famous Patrick LeBlanc (Guy in a Cube) will take a deep dive into the Microsoft Fabric ecosystem, from Lakehouse to Warehouses and Power BI, ensuring you can make informed decisions about your data processing needs. Your humble correspondent will help you catch up on the latest in Microsoft BI. CloudStaff.ai will sponsor the event. For more details and to sign up, visit our group page.

Presentation: Navigating Microsoft Fabric – Choosing the Right Workload for Your Needs

Delivery: In-person

Date: March 4

Time: 18:30 – 20:30 ET

Level: Beginner/Intermediate

Food: Pizza and drinks

Agenda:

18:15-18:30 Registration and networking

18:30-19:00 Organizer and sponsor time (events, Microsoft BI latest, sponsor marketing)

19:00-20:15 Main presentation

20:15-20:30 Q&A

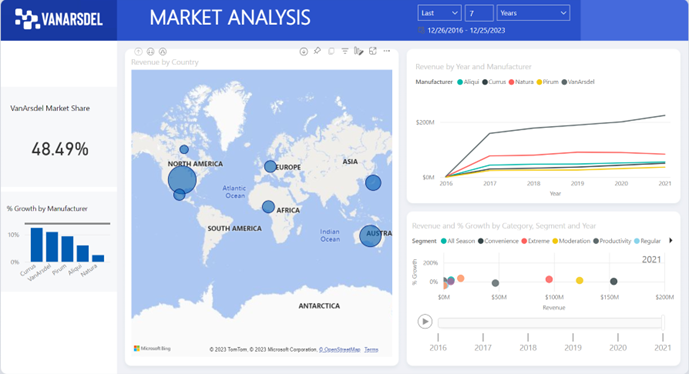

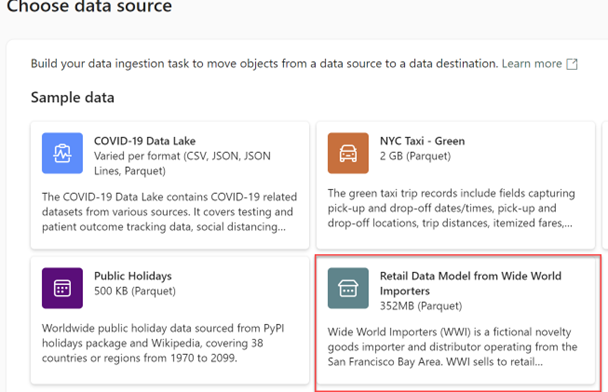

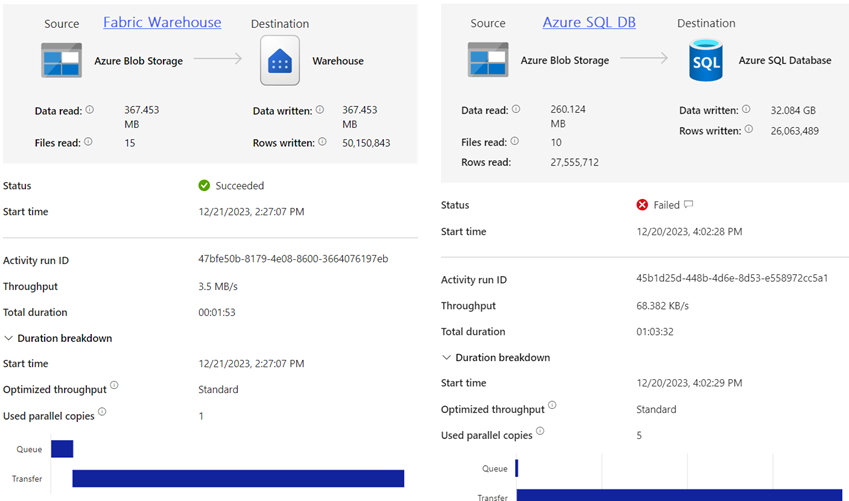

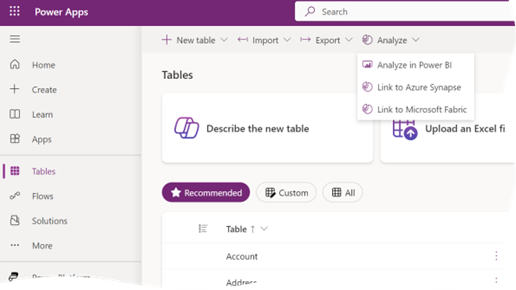

Overview: As businesses transition to the cloud and leverage advanced analytics, understanding the nuances of data infrastructure becomes paramount. Microsoft Fabric offers a suite of powerful tools designed to handle various data workloads, but the key to harnessing its full potential lies in understanding which tool to use and when. This session provides a deep dive into the Microsoft Fabric ecosystem, from Lakehouse to Warehouses and Power BI, ensuring that participants can make informed decisions about their data processing needs. We’ll also look at current limitations to help guide your choices.

Speaker: Patrick LeBlanc is currently a Principal Program Manager at Microsoft and a contributing partner to Guy in a Cube. Along with his 15+ years’ experience in IT, he holds a Master of Science degree from Louisiana State University. He is the author and co-author of five SQL Server books. Prior to joining Microsoft, he received the Microsoft MVP award for his contributions to the community. Patrick is a regular speaker at many SQL Server conferences and community events.

Sponsor: CloudStaff.ai