Correlating Analysis Services Errors with Measures

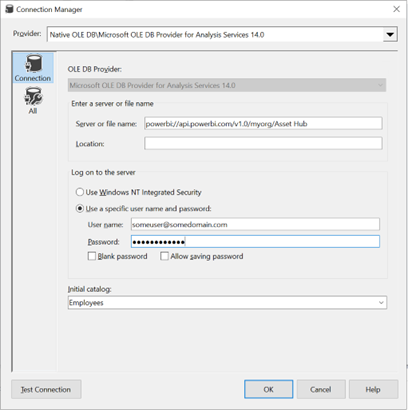

This blog builds upon my previous “Resolving Tabular Conversion Errors” and applies to Analysis Services in all flavors (Power BI, MD, and Tabular). In the scenario I described in the previous blog, the server at least told us the name of the offending measure in the error description. But sometimes you might not be that lucky. For example, recently I got this error when running a DAX query requesting many measures: “Microsoft OLE DB Provider for Analysis Services.” Hresult: 0x80004005 Description: “MdxScript(Model) (2000, 133) Failed to resolve name ‘SYNTAXERROR’. It is not a valid table, variable, or function name.” All we know is that there is a syntax error in some measure but good luck finding it if you have hundreds of measures in the query and your model. However, the (2000,133) section references the line number and column number in the MDX script (Yeap, MDX even if you use Tabular), so if we can get the script, we might be able to correlate the error.

Getting that script is elusive as the only way I know off for Tabular models is to trace the “Execute MDX Script Begin” or “Execute MDX Script End” events. But these events are generated only the first time a user with a given set of role permissions connects to a cube after processing, clearing the cache, or restarting the server. So, after you connect the SQL Server Profiler to the cube and check either of these two events (you must click Show All Events because the events are not shown by default), you can execute Clear Cache or process a table. Then, connect to the cube and execute any MDX or DAX query. In the Profiler, locate the “Execute MDX Script Begin” event. The payload should start with CALCULATE followed by all measure definitions.

CALCULATE;

CREATE MEMBER CURRENTCUBE.Measures.[__Default measure] AS 1; ALTER CUBE CURRENTCUBE UPDATE DIMENSION Measures, Default_Member = [__Default measure];

CREATE

MEASURE'Date'[Days Current Quarter to Date]=COUNTROWS( DATESQTD( 'Date'[Date]))

MEASURE'Date'[Days in Current Quarter]=COUNTROWS( DATESBETWEEN( 'Date'[Date], STARTOFQUARTER( LASTDATE('Date'[Date])), ENDOFQUARTER('Date'[Date])))

MEASURE'Internet Sales'[Internet Distinct Count Sales Order]=DISTINCTCOUNT([Sales Order Number])

….

Copy that payload and paste it in an editor that has line numbers, such as Notepad++. Then go to the line number (you can press Ctrl+G in Notepad) mentioned in the error description.

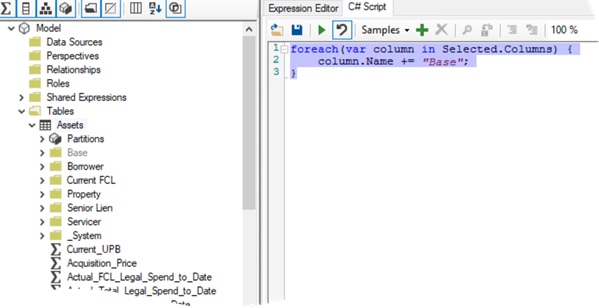

Using this technique, I was able to narrow down the measure and discovered that I’ve missed a comma in the DIVIDE DAX function. I’ve made the change in the Tabular Editor, but it didn’t catch the syntax error.