Atlanta MS BI and Power BI Group Meeting on May 4th

MS BI fans, please join us online for the next Atlanta MS BI and Power BI Group meeting on Monday, May 4th, at 6:30 PM. Bill Anton will show you how to effectively apply time intelligence to your Power BI data models. For more details, visit our group page and don’t forget to RSVP (fill in the RSVP survey if you’re planning to attend).

| Presentation: | Power BI Time Intelligence – Beyond the Basics |

| Date: | May 4th, 2020 |

| Time: | 6:30 – 8:30 PM ET |

| Place: | Join Microsoft Teams Meeting (Learn more about Teams; Meeting options). Computer audio is recommended. Conference bridge number: 1 605 475 4300, Access Code: 208547 |

| Overview: | Time intelligence refers to analyzing calculations and metrics across time and is the most common type of business intelligence reporting. Power BI has many built-in capabilities to help you get started, but these alone are often not enough for real-world solutions.

The key to mastering time intelligence in Power BI is a good date table and an understanding of how to manipulate the filter context. This session will teach you both! In this (demo-heavy) session, we’ll quickly review Power BI’s built-in time-intelligence capabilities and why you should avoid them! We’ll also cover the importance of a good date table, what attributes it should include, and how it can be leveraged to simplify complex time-intelligence calculations. Finally, we’ll break down a handful of the 40+ DAX time-intelligence functions, showing you how they work under the covers (hint: filter context) and how they can be used in combination to accommodate complex business logic. |

| Speaker: | Bill Anton is an independent consultant whose primary focus is designing and developing Data Warehouses and Business Intelligence solutions using the Microsoft BI stack. When he’s not working with clients to solve their data-related challenges, he can usually be found answering questions on the MSDN forums, attending PASS meetings, or writing blog posts over at byoBI.com. |

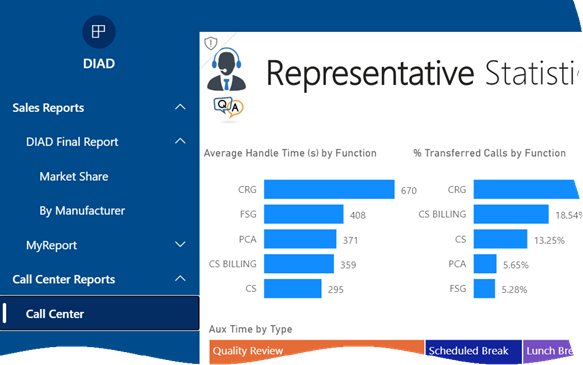

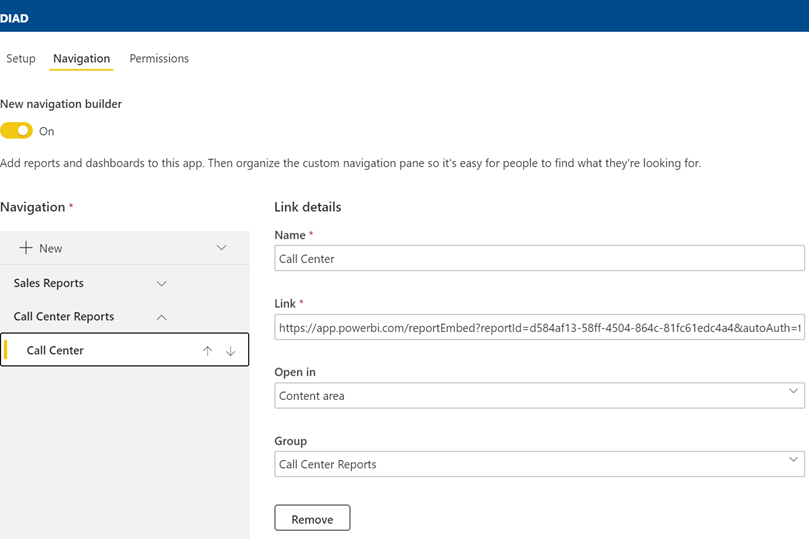

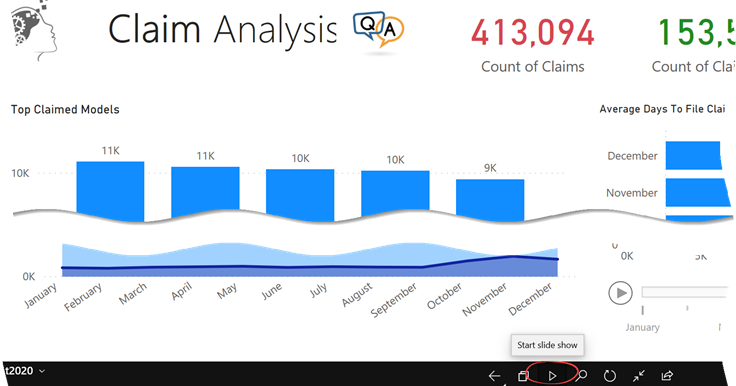

| Prototypes “without” pizza: | Power BI latest features |